StreamOwl was selected in the 3rd Open Call for Experiments of the COVR Project (Grant agreement ID: 779966) to develop solutions for the safe operation of Autonomous Mobile Robots (AMRs) in shared and collaborative workspaces. More details about the Open Call are available at https://www.safearoundrobots.com

Transporting materials within logistics and manufacturing facilities remains a major challenge in dynamic environments. Today, workers often have to leave their stations to push carts between manufacturing processes and the stockroom, which results in production backlogs and idle workers. Traditional automated guided vehicles follow fixed routes defined by permanent wires or sensors embedded in the floor. However, such systems are inflexible, expensive, unsafe for employees, and disruptive in non-static environments. The main reason this process has not yet been automated is that the facility layout is often dynamic, with people moving around and obstacles without a fixed position. Therefore, an automated material transportation approach must be flexible and adaptable without additional cost or disruption to processes and, most importantly, safe to operate around employees.

The Realistic Trial addresses the safe transportation of materials by AMRs in dynamic environments within facilities, where the main navigation difficulty is that the layout changes, people move around, and obstacles have no fixed position.

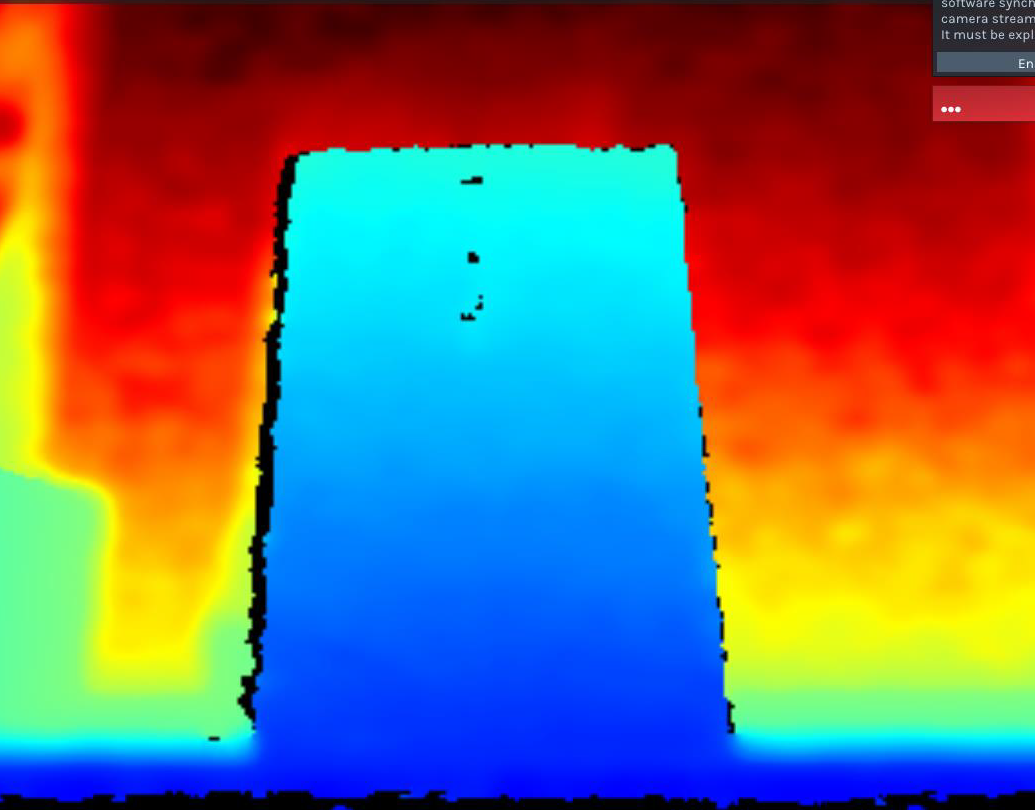

“Challenge 1” of the Realistic Trial aims to improve, validate, and quantify the ability of the AMR developed by StreamOwl to maintain a safe distance from an object (a human or a fixed object) placed on its path. The challenge is to use the AMR's integrated lidar and 3D camera to ensure that the robot stops at a pre-defined distance before the object or human in its path. The Realistic Trial includes the validation of the protocol and the identification of the parameters/factors that may affect the ability of the AMR to maintain a safety distance from an object on its path. In this challenge, we evaluated factors such as payload mass, vehicle velocity, and object/obstacle dimensions. The experiment includes validation using the protocol “Test mobile platform to maintain a separation distance – MOB-MSD-1”. We collected measurement data from the AMR's integrated peripheral sensors in different scenarios, e.g., loaded/unloaded AMR, different velocity/load pairs, different object/human dimensions, and different angles between the object and the vehicle's direction of travel. The measurement data include the distance to the object as computed by the Intel RealSense D435i 3D camera and the distance to the object as computed by the Garmin Lidar-Lite V3HP lidar on the AMR.
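The link between vehicle velocity, payload, and the pre-defined stopping distance can be illustrated with a standard kinematic model. The sketch below is a minimal illustration under stated assumptions, not StreamOwl's implementation: it assumes a constant reaction time and a constant braking deceleration (both hypothetical parameters, with a heavier payload modelled as a lower deceleration), and it fuses the camera and lidar readings conservatively by taking the closer of the two. The names `StopParams`, `stopping_distance`, `fused_distance`, and `must_brake` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class StopParams:
    # Hypothetical parameters for illustration; not values from the trial.
    reaction_time_s: float    # sensing + processing + actuation latency
    decel_mps2: float         # braking deceleration; lower for a heavier payload
    safety_distance_m: float  # pre-defined separation distance to keep


def stopping_distance(v_mps: float, p: StopParams) -> float:
    """Distance travelled from detection to standstill: v * t_r + v^2 / (2 * a)."""
    return v_mps * p.reaction_time_s + v_mps ** 2 / (2.0 * p.decel_mps2)


def fused_distance(camera_m: Optional[float], lidar_m: Optional[float]) -> float:
    """Conservative fusion: use the closer of the available sensor readings."""
    readings = [d for d in (camera_m, lidar_m) if d is not None]
    if not readings:
        # No valid reading: fail safe by treating the obstacle as touching the robot.
        return 0.0
    return min(readings)


def must_brake(v_mps: float, camera_m: Optional[float],
               lidar_m: Optional[float], p: StopParams) -> bool:
    """True if braking is needed now to stop at or before the safety distance."""
    remaining = fused_distance(camera_m, lidar_m) - stopping_distance(v_mps, p)
    return remaining <= p.safety_distance_m


if __name__ == "__main__":
    params = StopParams(reaction_time_s=0.1, decel_mps2=1.0, safety_distance_m=0.5)
    print(round(stopping_distance(1.0, params), 3))               # 0.6
    print(must_brake(1.0, camera_m=2.0, lidar_m=1.8, p=params))   # False
    print(must_brake(1.0, camera_m=1.2, lidar_m=1.0, p=params))   # True
```

With these illustrative values, a robot travelling at 1.0 m/s needs about 0.6 m to stop, so with a 0.5 m safety distance it must start braking once the closest reading drops to about 1.1 m. Halving the deceleration (a heavier payload in this model) lengthens the stopping distance, which is one way payload mass and velocity can affect the measured separation.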

“Challenge 2” of the Realistic Trial aims to improve, validate, and quantify the ability of the AMR developed by StreamOwl to limit its physical energy so that the applied force and pressure stay below specified thresholds. The challenge is to use the AMR's integrated software and hardware to ensure that the robot is safe to work in a collaborative space. The Realistic Trial includes the validation of the protocol and the identification of the parameters/factors that may affect the ability of the AMR to limit its physical energy and to allow a person who has been clamped to be released. In this challenge, we evaluated factors such as payload mass, vehicle velocity, and the body part of the endangered human operator. The experiment includes validation using the protocol “Collision Test for mobile unit with fixed object – XD-MOB-LIE-1”. The load cell measurement device records the applied force over time, which provides the maximum applied force and the duration of the applied force. In every test, the AMR kept the applied force below the specified thresholds. As expected, the applied force increases as the payload and the speed of the AMR increase, but it always remains below the safety thresholds.
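Extracting the maximum applied force and its duration from a load-cell recording can be sketched as follows. This is a minimal illustration, not the XD-MOB-LIE-1 evaluation procedure: the contact threshold, the force limit, and the sample trace are hypothetical values chosen only to show the calculation, and the functions `force_profile_summary` and `below_threshold` are hypothetical names. Duration is approximated with a left-rectangle rule over the sampled signal.

```python
from typing import Sequence, Tuple


def force_profile_summary(t_s: Sequence[float], force_n: Sequence[float],
                          contact_threshold_n: float) -> Tuple[float, float]:
    """Return (peak force in N, time in s during which force exceeds the threshold).

    Duration uses a left-rectangle rule: each sampling interval counts if the
    force at its starting sample is above contact_threshold_n.
    """
    if len(t_s) != len(force_n) or len(t_s) < 2:
        raise ValueError("need matching time/force series with at least 2 samples")
    peak = max(force_n)
    duration = 0.0
    for i in range(1, len(t_s)):
        if force_n[i - 1] > contact_threshold_n:
            duration += t_s[i] - t_s[i - 1]
    return peak, duration


def below_threshold(peak_n: float, max_force_n: float) -> bool:
    """Pass/fail check of the peak force against a (hypothetical) force limit."""
    return peak_n <= max_force_n


if __name__ == "__main__":
    # Synthetic load-cell trace (not trial data), sampled every 10 ms.
    t = [0.00, 0.01, 0.02, 0.03, 0.04, 0.05]
    f = [0.0, 60.0, 120.0, 80.0, 5.0, 0.0]
    peak, dur = force_profile_summary(t, f, contact_threshold_n=10.0)
    print(peak, round(dur, 3))                        # 120.0 0.03
    print(below_threshold(peak, max_force_n=150.0))   # True (hypothetical limit)
```

In practice the force limit would depend on the body part under test, so the same summary would be compared against a different threshold for each body region evaluated.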